🎯 Quick Answer

If you've started exploring local AI — maybe running a private model on your laptop or setting up an OpenClaw agent on your own hardware — you've almost certainly run into file names like Q4_K_M.gguf.

Quantization is the reason those file names exist, and it's also the reason you can run a capable AI model on a normal computer at all. Without it, you'd need the kind of GPU rack that lives in a data center. With it, a model that used to require 14 GB of memory now fits in roughly 4 GB and runs comfortably on a five-year-old laptop.

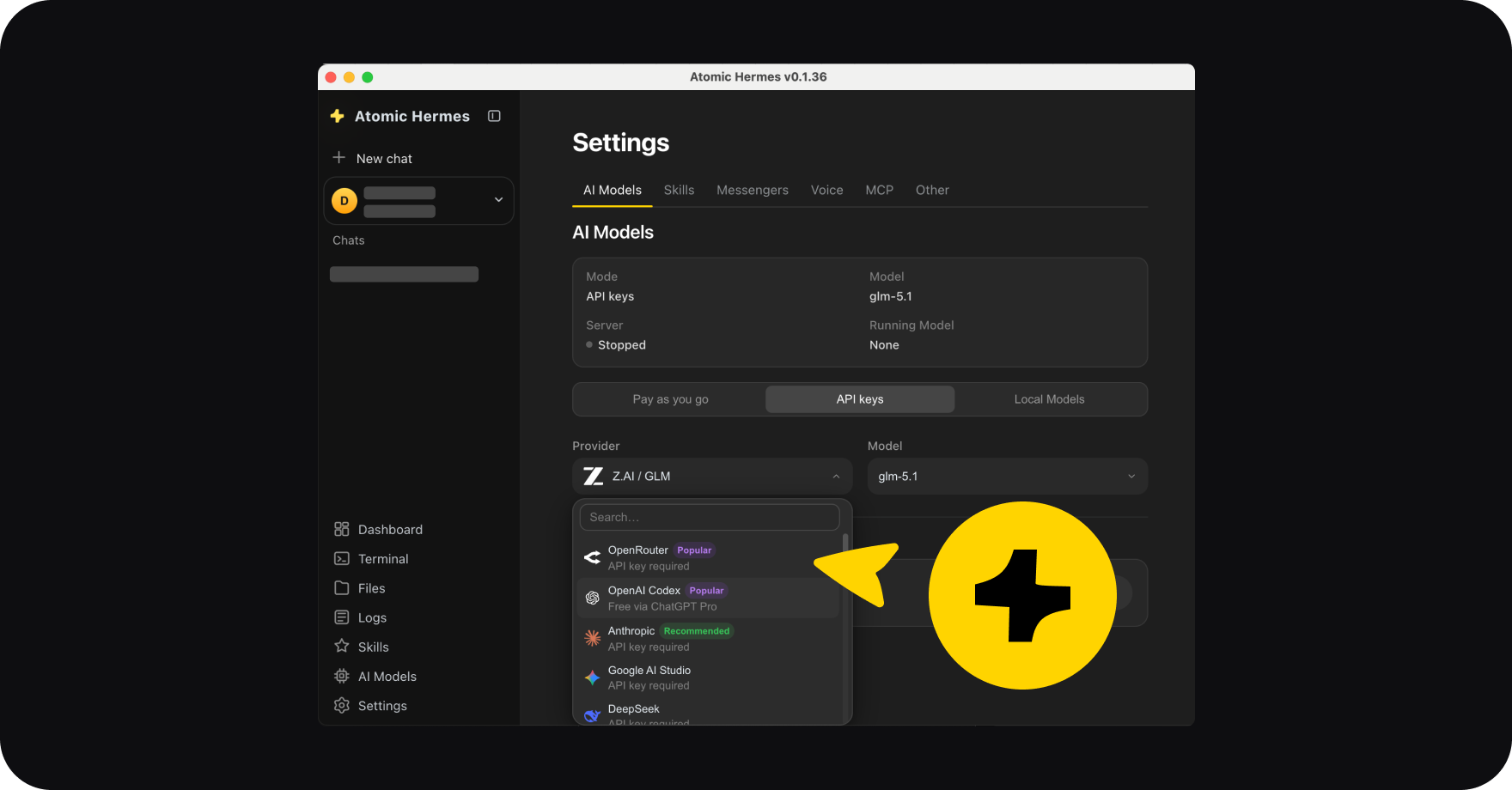

Atomic Chat handles all of this automatically, so you can run smart models on modest hardware without ever having to pick a quantization level.

But if you still want to understand what's happening under the hood, this guide walks through what quantization actually does, what the Q numbers mean, what GGUF is, and which version you should download.

🧠 How Quantization Works

An AI model is essentially a giant pile of numbers (weights), and a modern model has billions of them. By default, each weight takes up 16 bits (2 bytes) of memory in a format called FP16. That adds up fast: a model with 7 billion parameters comes out to around 14 GB (7 billion × 2 bytes) just to store the weights, before you've even loaded it into memory to use it.

Quantization compresses those weights into fewer bits per number. Instead of 16 bits per weight, you use 8, or 4, or in extreme cases even 2. The math behind it gets technical, but the practical result is simple: the file gets dramatically smaller, and the model still produces output that's nearly identical to the original.

The closest everyday parallel is what happens when you save a photo as a JPEG instead of keeping it as a RAW file. The JPEG is a fraction of the size, and for normal viewing you can't tell the difference, even though some of the fine detail has technically been thrown away. Quantization does something similar to a model — it strips out precision that the model doesn't really need, and what's left is good enough that you'd struggle to notice the loss in normal use.
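To make the idea concrete, here is a minimal sketch of one of the simplest quantization schemes: symmetric round-to-nearest with a single scale factor. It is an illustration only, not the block-wise K-quant method that GGUF files actually use, and the function names `quantize` and `dequantize` are made up for this example.

```python
def quantize(weights, bits=4):
    """Map floats to small signed integers using one shared scale (illustrative only)."""
    qmax = 2 ** (bits - 1) - 1                    # 7 for 4-bit signed codes
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each weight to the nearest step and clamp it to the allowed range.
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


weights = [0.12, -0.53, 0.98, -0.05, 0.31]
codes, scale = quantize(weights, bits=4)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print(codes)        # small integers that each fit in 4 bits
print(max_error)    # never more than half a step (scale / 2)
```

Each weight is now stored as a 4-bit integer plus one shared scale, and the restored values sit within half a quantization step of the originals. Real formats refine this idea by using many small blocks, each with its own scale.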

In practical terms, here's what you get out of it:

- A 7B model drops from around 14 GB to roughly 4 GB at Q4

- RAM requirements fall to a level where 8 GB and 16 GB machines can run serious models

- Inference speeds up because there's less data for the CPU and GPU to move around

- You can fit larger and smarter models on the hardware you already own
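The size figures above come from simple arithmetic: parameters times bits per weight, divided by 8 to get bytes. A rough sketch, where `estimate_weight_size_gb` is a hypothetical helper name and a gigabyte is taken as 10^9 bytes:

```python
def estimate_weight_size_gb(num_params, bits_per_weight):
    """Approximate storage for the weights alone, in GB (10**9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


fp16_gb = estimate_weight_size_gb(7e9, 16)   # full-precision 7B model
q8_gb = estimate_weight_size_gb(7e9, 8)      # 8 bits per weight
q4_gb = estimate_weight_size_gb(7e9, 4)      # 4 bits per weight

print(fp16_gb, q8_gb, q4_gb)
```

The pure 4-bit figure comes out a little under the "roughly 4 GB" quoted above. Real files tend to be somewhat larger than this estimate, since they also store things like scale factors and keep some layers at higher precision.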

🔢 Q4, Q5, Q8: What the Numbers Mean

The naming convention is simpler than it looks at first. The number after the Q is the nominal number of bits each weight gets after compression. Q8 means 8 bits per weight, Q4 means 4 bits, and so on. The K-quant variants keep some layers at higher precision, so the true average sits a little above that figure. Lower numbers mean smaller files and faster inference, but they also mean more aggressive compression and a bigger gap from the original quality.

The pattern in the table is the part worth remembering. As you move from Q8 down to Q4, you cut the file size almost in half but lose only a few percent of the model's quality. Drop below Q4, and the losses start piling up quickly — by Q2, the model is noticeably dumber and prone to mistakes that the full-precision version would never make.

For most people, Q4_K_M lands in the sweet spot. It's about a quarter of the original size and retains roughly 95% of the original model's quality, all while running comfortably on a machine with 16 GB of RAM. That's why it shows up as the default recommendation almost everywhere you look.

📦 What Is GGUF?

GGUF stands for GPT-Generated Unified Format, and it's the standard file format for quantized models in the local AI world. It came out of the llama.cpp project, which is the inference engine that most local AI tools rely on under the hood. A GGUF file packages the model weights, the tokenizer, and the metadata into a single portable file you can move between machines without breaking anything.
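If you're curious what "a single portable file" looks like at the byte level, here is a small sketch that reads the fixed header at the start of a GGUF file. It assumes the version 2 and later layout as we understand it from the GGUF specification (a 4-byte `GGUF` magic, a 32-bit version, then 64-bit tensor and metadata counts, all little-endian), so check the spec before relying on it. The function name `read_gguf_header` is our own.

```python
import struct

GGUF_MAGIC = b"GGUF"
HEADER_FORMAT = "<4sIQQ"                          # magic, version, tensor count, metadata count
HEADER_SIZE = struct.calcsize(HEADER_FORMAT)      # 24 bytes


def read_gguf_header(data):
    """Parse the fixed-size prefix of a GGUF file (assumed v2+ layout)."""
    if len(data) < HEADER_SIZE:
        raise ValueError("too short to contain a GGUF header")
    magic, version, tensor_count, kv_count = struct.unpack(
        HEADER_FORMAT, data[:HEADER_SIZE]
    )
    if magic != GGUF_MAGIC:
        raise ValueError("not a GGUF file")
    return {
        "version": version,
        "tensor_count": tensor_count,
        "metadata_kv_count": kv_count,
    }


# Synthetic header bytes for demonstration; the counts are made-up values.
fake_header = struct.pack(HEADER_FORMAT, GGUF_MAGIC, 3, 291, 24)
header = read_gguf_header(fake_header)
print(header)
```

To inspect a real model, open the file in binary mode, read the first 24 bytes, and pass them to the same function. The metadata entries and tensor data follow the header and need a fuller parser.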

The format was designed specifically for the kind of hardware most people actually have. It runs well on regular CPUs, it takes advantage of GPUs when one is available, and it's particularly well-tuned for Apple Silicon Macs. Practically every local AI tool worth using supports it, including Atomic Chat, LM Studio, Ollama, and Jan AI.

How to Read a GGUF File Name

Once you know what to look for, the file names start making sense. Take llama-4-8b-instruct-Q4_K_M.gguf as an example.

Going piece by piece:

- llama-4 tells you the model family and version, in this case Meta's Llama 4

- 8b means the model has 8 billion parameters

- instruct means it's been fine-tuned to follow instructions and hold conversations rather than just predict text

- Q4 is the quantization level, so 4 bits per weight

- K_M refers to the K-quant strategy at Medium precision, which selectively keeps more bits on the layers that matter most for quality

- .gguf is the file extension

The single letter at the end of the quantization tag controls how aggressively the less important layers get compressed. M stands for Medium and is the default that almost everyone should use. The other options (S for Small and L for Large) trade size against quality in either direction, but the difference is small enough that most users never bother switching.
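The same breakdown can be sketched in code. The parser below is a hypothetical helper (`parse_gguf_name` is not part of any tool), and it assumes the file name follows the pattern in the example above. File names are a community convention rather than a strict standard, so real downloads may not always match.

```python
import re

# Matches names like "<model>-Q4_K_M.gguf" or "<model>.Q8_0.gguf".
QUANT_PATTERN = re.compile(
    r"^(?P<name>.+)[-.]"
    r"(?P<quant>Q(?P<bits>\d+)(?:_(?P<scheme>K|\d+))?(?:_(?P<variant>[SML]))?)"
    r"\.gguf$"
)


def parse_gguf_name(filename):
    """Split a GGUF file name into its parts, or return None if it doesn't fit."""
    match = QUANT_PATTERN.match(filename)
    if match is None:
        return None
    # Look for a size token such as "8b" or "1.5b" among the dash-separated parts.
    params_billions = None
    for token in match.group("name").split("-"):
        size = re.fullmatch(r"(\d+(?:\.\d+)?)b", token, re.IGNORECASE)
        if size:
            params_billions = float(size.group(1))
    return {
        "model": match.group("name"),
        "params_billions": params_billions,
        "quant": match.group("quant"),
        "bits": int(match.group("bits")),
        "scheme": match.group("scheme"),      # "K" for K-quants, "0" for Q8_0
        "variant": match.group("variant"),    # "S", "M", "L", or None
    }


info = parse_gguf_name("llama-4-8b-instruct-Q4_K_M.gguf")
print(info)
```

For the example file name, this reports an 8-billion-parameter model at 4 bits with the K-quant scheme and the Medium variant.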

⚖️ GPTQ, AWQ, and GGUF: Which Format to Choose?

GGUF isn't the only quantization format out there, but it's the one you'll use almost all the time when running models on your own computer. The other two names you'll occasionally see are GPTQ and AWQ, and each exists for a specific use case rather than general local use.

GPTQ was built specifically for NVIDIA GPU inference. It pushes higher throughput than GGUF when you're running on a beefy graphics card, but it's less flexible and doesn't perform well on CPUs or Apple Silicon.

AWQ stands for Activation-aware Weight Quantization. It's a more sophisticated compression approach that preserves quality slightly better than GPTQ for tasks like coding and creative writing. Like GPTQ, it's mainly aimed at GPU servers rather than personal machines.

The simple rule is this: if you're running AI on your own computer, use GGUF. The other formats matter when you're deploying models on a GPU server or building a production inference pipeline, but for a normal laptop or desktop you'll never need to touch them.

🎚️ Which Quantization Level Should You Pick?

The right answer depends almost entirely on how much RAM you have to work with.

- If you have 32 GB of RAM or more, you can comfortably run Q8_0 for the highest possible quality. You could also step up to a larger model at Q5_K_M and still have room to spare. Either option will give you output that's essentially indistinguishable from the original full-precision model.

- If you have 16 GB of RAM, Q4_K_M is the default for good reason. It fits in memory without straining your system, and the quality drop from the original is small enough that you'd have to look hard to find it.

- If you're working with 8 GB of RAM, Q4_K_M still works, but you'll want to pair it with a smaller model like Phi-4 Mini or Gemma 3 4B rather than trying to squeeze a 7B model in. A smaller model at a good quantization level will beat a bigger model at an aggressive one almost every time.

- If you can barely fit anything, you can drop down to Q3_K_M to make things run, but expect the quality to take a real hit. At that point it's usually worth asking whether you'd be better off with a smaller model at Q4 instead.

And if you're not sure where you fall? Pick Q4_K_M. It's the default for a reason, and it's almost always the right call.
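The rules of thumb above can be written down as a tiny lookup. This is only a sketch of the guidance in this section: `recommend_quant` is a hypothetical function, and the treatment of in-between RAM sizes (such as 24 GB) is our assumption, not a hard rule.

```python
def recommend_quant(ram_gb):
    """Return (quantization level, note) for a given amount of RAM in GB."""
    if ram_gb >= 32:
        return "Q8_0", "or a larger model at Q5_K_M"
    if ram_gb >= 16:
        return "Q4_K_M", "the default choice"
    if ram_gb >= 8:
        return "Q4_K_M", "pair it with a smaller model"
    return "Q3_K_M", "expect a quality hit; consider a smaller model at Q4"


for ram in (64, 16, 8, 4):
    level, note = recommend_quant(ram)
    print(f"{ram} GB RAM: {level} ({note})")
```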

⚡ Atomic Chat: Quantization Without the Complexity

Everything you've just read is useful background, but most people don't want to think about quantization levels, K-quant strategies, and RAM math when they sit down to use an AI assistant like OpenClaw. Agents like that are already quite difficult to set up, even before you add the local AI component.

That's the whole point of Atomic Chat. It looks at the hardware you're running on, picks the right model and quantization level for your machine, downloads it, and gets you chatting in a couple of clicks. There are no file names to decode and no second-guessing whether you should have grabbed Q4 or Q5. Atomic Chat just hands you a model that already fits your device.

It's also the simplest way to run a local OpenClaw setup. You get a private AI agent that lives entirely on your own computer, with the model already optimized for the hardware it's running on, and none of the underlying complexity ever gets in your way.

Want the full picture on running AI locally? Read our complete guide on how to run AI locally →

→ See which models and quantization levels work best for your setup

Quantization is the quiet bit of engineering that makes local, private AI practical for normal people on normal hardware. Q4_K_M is the default you'll see almost everywhere, GGUF is the format that holds it all together, and tools like Atomic Chat make the entire process invisible so you can skip straight to actually using the model.
