Local AI means running artificial intelligence directly on your own device — your Mac, PC, or server — instead of sending your data to cloud services like ChatGPT, Google Gemini, or Claude.

Why does this matter? Three reasons: privacy, control, and cost.

When you use ChatGPT, every message, every document, every question goes through OpenAI's servers. If you're processing client emails, financial documents, medical notes, or anything confidential — you probably don't want that data sitting on someone else's infrastructure.

Local AI, on the other hand, keeps everything on your machine. So how do you run it?

Here's the quick breakdown of how to run AI locally on your Mac:

If you want a local AI assistant that is easy to set up and can actually do real-world tasks on your machine — Atomic Bot installs OpenClaw in one click and runs it locally on your Mac or PC.

🤔 What Is Local AI?

Local AI is any artificial intelligence system that runs entirely on your own laptop, desktop, phone, or private server — without sending data to external cloud services.

When you talk to ChatGPT, here's what happens behind the scenes:

- You type a message

- It travels over the internet to OpenAI's data centers

- Their servers process it

- The response travels back to you

During this round trip, OpenAI has possession of your data, and it may log and store your conversation to train future models or collect information about you.

When you run AI locally, here's what happens:

- You type a message

- Your own CPU/GPU processes it

- You get a response

In this case, nothing ever leaves your machine.
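To make the local flow concrete, here is a minimal Python sketch that sends a prompt to a model served on your own machine. It assumes Ollama (one of the tools mentioned in this article) is installed and listening on its default local address, `http://localhost:11434`, and that a model has already been downloaded. The model name `llama3.2` and the helper names `build_request` and `ask_local_model` are placeholders chosen for illustration.

```python
import json
import urllib.request

# Assumption: an Ollama server is running on this machine on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "llama3.2") -> urllib.request.Request:
    """Build a POST request for the local server (nothing is sent yet)."""
    # "stream": False asks for one complete JSON reply instead of chunks.
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_model(prompt: str, model: str = "llama3.2") -> str:
    """Send the prompt to localhost and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model)) as resp:
        return json.loads(resp.read())["response"]
```

Calling `ask_local_model("Summarize this note in one sentence.")` only talks to `localhost`, so the prompt and the reply stay on your machine. It will raise a connection error if no local server is running.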

There's a trade-off. Cloud AI (GPT-5.4, Claude Opus 4.6, Gemini 3.1 Pro) runs on enormous server farms with thousands of high-end GPUs.

Local AI runs on your own hardware, which probably isn't enterprise-grade, so you're limited to smaller models.

But here's the thing: local AI in 2026 is shockingly good. Models like Llama 3.3, Mistral, DeepSeek, and Gemma run smoothly on a MacBook with Apple Silicon and deliver quality that would've been bleeding-edge just two years ago.

🔐 Benefits of Local AI

1. Privacy

This is the #1 reason people go local. When nothing leaves your machine, nobody but you can see your conversations.

2. No subscription costs

Most cloud AI subscriptions cost about $20/month, but with local AI you can download an open-source model and run it for free.

3. Offline access

Local AI works completely offline, so you can use your AI assistant while on a plane, on a train, or in a subway tunnel.

4. Customization

You can fine-tune AI models that run locally on your machine and improve their performance for your particular tasks.

5. No downtime

Servers run by major AI providers go down from time to time, which means you may have to wait out an outage or maintenance window before you can access your AI assistant. With a locally running LLM, that's usually not an issue: once it's set up, it tends to keep working without interruptions.

🧠 Best Local AI Models in 2026

You might be wondering which AI model you should run locally, given the wide range of options from different providers. Here’s a quick overview of what we recommend installing to get started:

If you’re not sure where to start and just want one solid option, we’d recommend the Qwen 3 / Qwen 3.5 model family.

Its performance-to-size ratio is unusually strong, which means you can run a capable local AI assistant even on modest hardware.

💻 Can Your Laptop Run AI Locally?

Short answer: yes — especially if you have Apple Silicon (M1 or later).

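Here's why: Apple's M-series chips feature a unified memory architecture, where the CPU and GPU share the same pool of RAM. This means the GPU can use most of the machine's memory for model weights, so a MacBook Pro with 16GB of unified memory can run models that would otherwise need a discrete GPU with a comparable amount of video memory. Keep in mind that macOS and your other apps need part of that memory too.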

For PC users, you'll want a discrete GPU with at least 8GB of dedicated video memory (VRAM). Gaming-style setups are best suited for local AI.

Here's a rough guide to what your laptop or desktop can handle:

The sweet spot for most people in 2026: 18–36GB of unified memory on Apple Silicon, or the same amount of discrete GPU memory on a PC.

With that amount of memory you can run 7B–13B models comfortably with great quality and fast inference.
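As a rough way to check whether a model in the 7B–13B range fits your machine, you can estimate its footprint from the parameter count. The sketch below uses a common rule of thumb (parameters × bits per weight ÷ 8, plus some headroom). The 4-bit default and the 20% overhead for context and runtime are illustrative assumptions, not measured values, and real usage varies by runtime and context length.

```python
def estimate_model_memory_gb(params_billion: float,
                             bits_per_weight: int = 4,
                             overhead: float = 0.2) -> float:
    """Rough memory footprint in GB (10^9 bytes) for a quantized model."""
    # 1 billion parameters at 8 bits per weight is about 1 GB of weights.
    weight_gb = params_billion * bits_per_weight / 8
    # Assumed headroom for the context cache and runtime buffers.
    return weight_gb * (1 + overhead)


def fits(params_billion: float, available_gb: float, **kwargs) -> bool:
    """True if the estimated footprint is within the available memory."""
    return estimate_model_memory_gb(params_billion, **kwargs) <= available_gb


for size in (7, 13):
    print(f"{size}B at 4-bit: ~{estimate_model_memory_gb(size):.1f} GB")
```

Under these assumptions a 4-bit 7B model comes out at roughly 4.2 GB and a 4-bit 13B model at roughly 7.8 GB. Pass `bits_per_weight=16` to see how much more an unquantized model would need.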

🤖 How to Actually Run a Local AI Agent

There's an important distinction worth making.

Most local AI tools, such as Ollama, LM Studio, and MLX, let you chat with a model much like you would with ChatGPT, but they're not real AI agents.

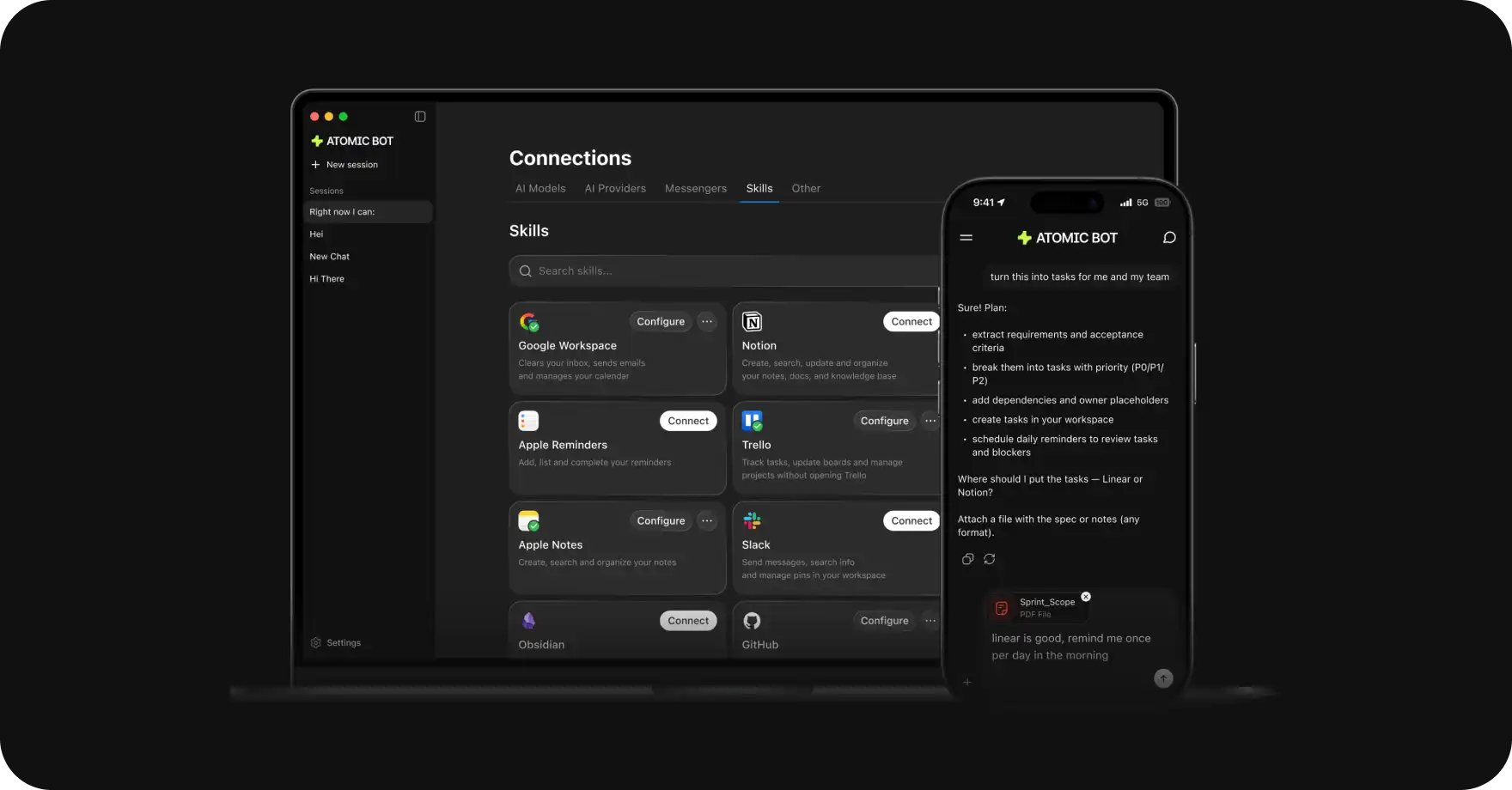

To run a real AI agent locally, you need OpenClaw — a true personal AI assistant, designed to actually do things on your Mac, such as send emails, manage your calendar, browse the web, organize files, and run automations.
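The difference between a chat interface and an agent can be illustrated with a toy Python sketch. This is not how OpenClaw is implemented. The tools, the `tool:argument` reply format, and the `fake_model` stand-in are all hypothetical, chosen only to show the idea that an agent turns model output into actions.

```python
from typing import Callable, Dict


# Hypothetical tools: a real agent would call mail, calendar, or file APIs here.
def create_note(text: str) -> str:
    return f"note saved: {text}"


def add_event(text: str) -> str:
    return f"event added: {text}"


TOOLS: Dict[str, Callable[[str], str]] = {"note": create_note, "calendar": add_event}


def fake_model(message: str) -> str:
    """Stand-in for a local LLM: replies with 'tool:argument' or plain text."""
    prefix = "remind me"
    if message.startswith(prefix):
        return "calendar:" + message[len(prefix):].strip()
    return "I can only chat about that."


def chat(message: str) -> str:
    """Chat interface: the model's text goes straight back to the user."""
    return fake_model(message)


def agent(message: str) -> str:
    """Agent: if the model's reply names a known tool, run that tool."""
    reply = fake_model(message)
    name, sep, arg = reply.partition(":")
    if sep and name in TOOLS:
        return TOOLS[name](arg)
    return reply
```

With the same input, `chat("remind me to call Sam")` only returns text describing the request, while `agent("remind me to call Sam")` actually invokes the calendar tool.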

And the easiest way to set up OpenClaw on your Mac is with Atomic Bot.

Atomic Bot is a macOS and Windows app that installs OpenClaw in one click.

To run it in local configuration, you just need:

- 8GB RAM or discrete GPU memory

- 5 minutes

Here’s how to install it:

Step 1: Download Atomic Bot

- Go to atomicbot.ai

- Click Download for Mac (or Download for Windows, for all the PC folks)

- Double-click the downloaded installer

Step 2: Install OpenClaw

- Open Atomic Bot

- It installs OpenClaw automatically and helps you configure everything

- Done ✅

Step 3: Connect Your Chat Interface

Atomic Bot lets you control OpenClaw through the messaging app you already use:

- Telegram (most popular)

- iMessage

- Discord

Pick one and follow the 30-second setup wizard.

❓ FAQ

What is local AI?

Local AI is artificial intelligence that runs directly on your own device (Mac, PC, server) instead of relying on cloud services.

Can I run AI on a MacBook Air?

Yes, if it has Apple Silicon (M1 or later). An M1 MacBook Air with 8GB RAM can run small models (3B–7B parameters) for basic tasks like chat, summarization, and simple code generation.

Is local AI free to use?

Yes, the software and models are free. You need a Mac with Apple Silicon (which you may already own). The only other expense to consider is electricity, but Macs are power-efficient, so it will be negligible (~$2–5/month).

What's the best local AI model for Mac?

For most users: an 8B-parameter Llama model. It's the best all-rounder: fast, capable, and able to run on 8GB+ RAM. For coding: DeepSeek Coder V2. For low-RAM Macs, consider Phi-3 Mini.

What's the difference between Ollama and Atomic Bot?

Ollama runs local language models for chat and text generation. Atomic Bot installs OpenClaw — a full AI agent that takes action (email, calendar, files, web browsing, automation).

🏁 Bottom Line

Local AI models are now genuinely good enough to replace cloud tools for most everyday tasks — especially if privacy matters to you.

If you're on Apple Silicon, you've already got the hardware. And if you want a local AI that actually does things, install Atomic Bot and get OpenClaw running in a few minutes. Your data stays on your machine, and you still get a powerful AI assistant.
